Hi folks,

I’ve been working on some new metrics to evaluate how well different survey strategies perform for microlensing events and tidal disruption events (TDEs). Both metrics distribute a sample of 10,000 events across the sky and over the 10-year survey, then count how many of them would be detected by a given LSST cadence simulation.

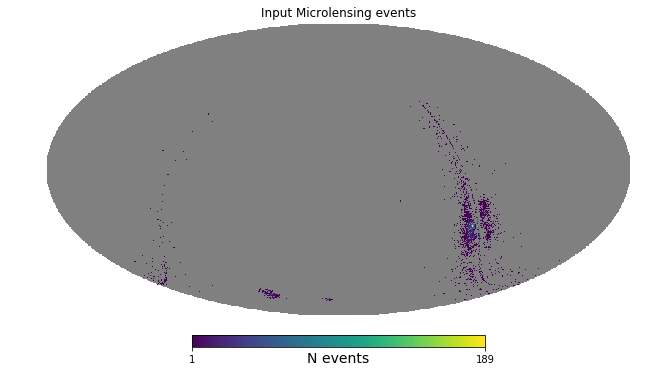

For microlensing, we assume events are distributed as stellar density squared, and draw from distributions of possible impact parameters and event durations.
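To make the injection step concrete, here is a minimal sketch, not the code in `microlensingMetric.py`. It assumes the sky is split into pixels with a known stellar density (e.g. a HEALPix map), picks pixels with probability proportional to density squared, and uses the standard point-source point-lens (Paczynski) magnification. The impact-parameter range, the log-uniform duration range, and the helper names are all hypothetical placeholders.

```python
import numpy as np

def paczynski_magnification(t, t0, u0, tE):
    """Point-source point-lens magnification A(u), with u(t) the lens-source separation."""
    u = np.sqrt(u0**2 + ((t - t0) / tE) ** 2)
    return (u**2 + 2) / (u * np.sqrt(u**2 + 4))

def lensed_magnitude(baseline_mag, t, t0, u0, tE):
    """Observed magnitude: the source is brighter by 2.5 log10(A)."""
    return baseline_mag - 2.5 * np.log10(paczynski_magnification(t, t0, u0, tE))

def sample_microlensing_events(stellar_density, n_events, rng):
    """Hypothetical sampler: sky pixel weight is proportional to stellar density squared."""
    weights = np.asarray(stellar_density, dtype=float) ** 2
    weights /= weights.sum()
    pix = rng.choice(weights.size, size=n_events, p=weights)
    # Assumed distributions, for illustration only:
    u0 = rng.uniform(0.0, 1.0, size=n_events)        # impact parameter in Einstein radii
    tE = 10 ** rng.uniform(0.0, 2.5, size=n_events)   # Einstein crossing time, ~1-300 days
    t0 = rng.uniform(0.0, 3652.5, size=n_events)      # peak time spread over ~10 years (days)
    return pix, u0, tE, t0
```

Squaring the density in the weights means a pixel with twice the stars gets four times the events, which matches the "stellar density squared" assumption (both source and lens counts scale with density).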

So the input looks like this:

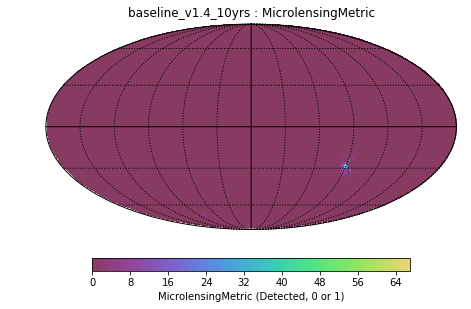

And we recover 16% of them:

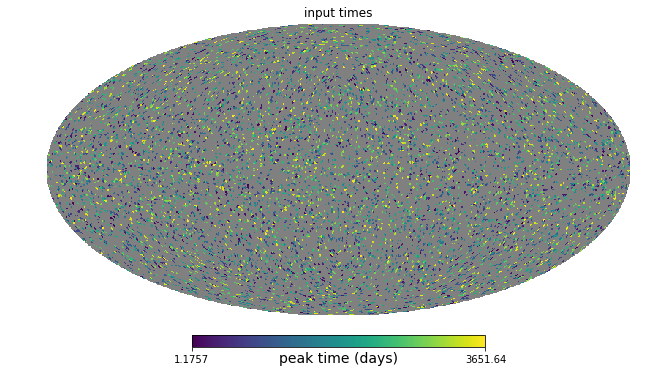

For the TDEs, we use an isotropic sky distribution and draw each event’s lightcurve from a set of example lightcurves. We apply dust extinction to the lightcurves, then check which ones get recovered.
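A minimal sketch of the TDE injection step, under stated assumptions rather than as the logic of `TDEsPopMetric.py`: positions are uniform on the sphere (RA uniform, sin(Dec) uniform), each event is assigned one template lightcurve at random, and extinction is applied as A_band = R_band × E(B−V). The `R_BAND` coefficients below are illustrative values assumed for LSST-like bandpasses; the real metric may use different ones, and the E(B−V) value would come from a dust map at each position.

```python
import numpy as np

# Assumed R_band = A_band / E(B-V) coefficients; illustrative only.
R_BAND = {"u": 4.145, "g": 3.237, "r": 2.273, "i": 1.684, "z": 1.323, "y": 1.088}

def isotropic_positions(n_events, rng):
    """Uniform on the sphere: RA uniform in degrees, sin(Dec) uniform in [-1, 1]."""
    ra = rng.uniform(0.0, 360.0, size=n_events)
    dec = np.degrees(np.arcsin(rng.uniform(-1.0, 1.0, size=n_events)))
    return ra, dec

def assign_templates(n_events, n_templates, rng):
    """Hypothetical: each event gets one example lightcurve, chosen uniformly."""
    return rng.integers(0, n_templates, size=n_events)

def apply_extinction(mags, filters, ebv):
    """Dim intrinsic magnitudes by A_band = R_band * E(B-V) for each filter."""
    r = np.array([R_BAND[f] for f in filters])
    return np.asarray(mags, dtype=float) + r * ebv
```

Sampling sin(Dec) uniformly, rather than Dec itself, is what keeps the distribution isotropic; a uniform Dec would over-populate the poles.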

TDE input:

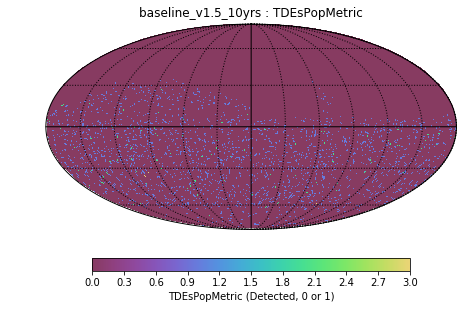

TDE recovered:

For both populations, I’ve set the criterion for “detected” to simply be 2 observations brighter than the 5-sigma limiting depth before peak, in any filter(s). The motivation is that this should be enough to trigger a decent alert and a preliminary classification so others can follow it up. We can add more detection criteria if people want. We can also replicate this format for other transients if folks have different lightcurves and/or spatial distributions they would like to check.
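The detection criterion can be written as a small check. This is a sketch assuming you already have, for each visit, its time, the model magnitude of the event in that visit’s filter, and the visit’s 5-sigma depth `m5`; the function name and argument layout are hypothetical, not the metric’s actual interface.

```python
import numpy as np

def detected_pre_peak(obs_mjd, obs_mag, obs_m5, peak_mjd, n_required=2):
    """True if at least n_required visits before peak see the event brighter
    (numerically smaller magnitude) than that visit's 5-sigma depth.
    Filters are not distinguished, so detections in any filter(s) count."""
    obs_mjd = np.asarray(obs_mjd, dtype=float)
    obs_mag = np.asarray(obs_mag, dtype=float)
    obs_m5 = np.asarray(obs_m5, dtype=float)
    good = (obs_mjd < peak_mjd) & (obs_mag < obs_m5)
    return int(np.count_nonzero(good)) >= n_required
```

The recovered fraction for a cadence is then just the mean of this boolean over all injected events, e.g. `np.mean([detected_pre_peak(...) for each event])`.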

These run quite a bit faster than some other transient metrics (under a minute) while still using N=10,000 events, so I’m hoping that’s sufficient statistical sampling. We want to use these to help rank survey strategies, so the absolute number of events matters less than how the recovered fraction varies between strategies.
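As a rough check on whether N=10,000 is enough, treat each injected event as an independent detected/not-detected trial, so the 1-sigma uncertainty on a recovered fraction p is the binomial value sqrt(p(1 − p)/N). This ignores any correlation from reusing the same injected population across cadences, which is an assumption.

```python
import math

def recovered_fraction_error(p, n):
    """1-sigma binomial uncertainty on a recovered fraction p from n injected events."""
    return math.sqrt(p * (1.0 - p) / n)

# Microlensing example above: p = 0.16, N = 10,000 gives roughly 0.0037 (0.37%).
sigma = recovered_fraction_error(0.16, 10_000)
```

Even in the worst case (p = 0.5) the error is 0.005, so the difference between two independent runs has an error of about 0.7 percentage points at most; differences of a couple of percentage points or more should stand out clearly when ranking strategies.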

Microlensing code: https://github.com/LSST-nonproject/sims_maf_contrib/blob/u/yoachim/microlensing/mafContrib/microlensingMetric.py

and notebook: https://github.com/LSST-nonproject/sims_maf_contrib/blob/u/yoachim/microlensing/science/Transients/Microlensing.ipynb

TDE code: https://github.com/LSST-nonproject/sims_maf_contrib/blob/u/yoachim/microlensing/mafContrib/TDEsPopMetric.py

and notebook: https://github.com/LSST-nonproject/sims_maf_contrib/blob/u/yoachim/microlensing/science/Transients/TDEsSlicer.ipynb

Tagging the possibly interested: @rstreet, @wadawson, @nsabrams, @fed, @CStubbs